Understanding Training Output

This is part of a series on ML for generalists, you can find the start here.

This is the output I get from our train.py on my machine, an aging Intel Mac:

This is the exciting bit: we're going to train our model using the dataset we generated earlier.

There's a lot of code, but don't be intimidated. About half of it is output that lets us watch the training process a little more closely. This is pretty common when you're designing and building (and troubleshooting) models.

If you've made it this far, you've understood the most difficult concepts. This is a bit verbose, that's all.

ReLU, or Rectified Linear Unit, keeps the positive values from a layer's output and replaces the negative values with zeros. So if one of our convolutional layers had -0.5 somewhere in its output, the ReLU step would turn that into 0. If it output 2.5, that stays 2.5.
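As a minimal sketch of that rule in plain Python (the `relu` helper and the sample values are only for illustration; in the model itself the `nn.ReLU()` layer does this job across whole tensors):

```python
def relu(values: list[float]) -> list[float]:
    """Keep positive values and replace negative ones with zero."""
    return [max(0.0, v) for v in values]


# Pretend this is a tiny slice of a convolutional layer's output.
layer_output = [-0.5, 2.5, 0.0, -3.0]
activated = relu(layer_output)
print(activated)  # [0.0, 2.5, 0.0, 0.0]
```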

It's a simple step, but it's fundamental to making our model actually work. The diagrams on the ReLU Wikipedia page are intimidating, but the principle really is that simple.

Why does it matter? We need non-linearity for our model to learn.
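One way to see why: two purely linear steps stacked on top of each other collapse into a single linear step, so the extra layer adds nothing. The sketch below uses one-number "layers" with made-up weights (all values are assumptions chosen for illustration) to show the collapse, and how a ReLU in between breaks it.

```python
def linear(x: float, weight: float, bias: float) -> float:
    """A one-input, one-output linear layer."""
    return weight * x + bias


def two_linear_layers(x: float) -> float:
    # Layer 1 feeds straight into layer 2, with nothing in between.
    return linear(linear(x, 2.0, 1.0), -3.0, 0.5)


def collapsed(x: float) -> float:
    # -3 * (2x + 1) + 0.5 simplifies to -6x - 2.5: still one straight line.
    return linear(x, -6.0, -2.5)


def with_relu(x: float) -> float:
    # Same two layers, but negative hidden values are zeroed first.
    hidden = max(0.0, linear(x, 2.0, 1.0))
    return linear(hidden, -3.0, 0.5)


inputs = [-2.0, -1.0, 0.0, 1.0]
stacked = [two_linear_layers(x) for x in inputs]
single = [collapsed(x) for x in inputs]
bent = [with_relu(x) for x in inputs]

print(stacked == single)  # True: the two layers behave like one
print(bent)               # [0.5, 0.5, -2.5, -8.5]: flat, then sloping
```

With the ReLU in place, the output is flat for some inputs and sloped for others, which no single straight line can reproduce. That bend is what lets deeper networks represent more than a single linear layer could.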

A quick recap of the components in our model.

    import torch
    import torch.nn as nn


    class OrientationModel(nn.Module):
        def __init__(self):
            super().__init__()
            self.conv = nn.Sequential(
                nn.Conv2d(in_channels=1, out_channels=32, kernel_size=3, padding=1),
                nn.ReLU(),
                nn.MaxPool2d(kernel_size=2, stride=2),
                nn.Conv2d(in_channels=32, out_channels=64, kernel_size=3, padding=1),
                nn.ReLU(),
                nn.MaxPool2d(kernel_size=2, stride=2),
                nn.Conv2d(in_channels=64, out_channels=128, kernel_size=3, padding=1),
                nn.ReLU(),
                nn.MaxPool2d(kernel_size=2, stride=2),
                nn.Conv2d(in_channels=128, out_channels=128, kernel_size=3, padding=1),
                nn.ReLU(),
                nn.MaxPool2d(kernel_size=2, stride=2),
                nn.Conv2d(in_channels=128, out_channels=128, kernel_size=3, padding=1),
                nn.ReLU(),
                nn.MaxPool2d(kernel_size=2, stride=2),
            )
            self.flatten = nn.Flatten()
            self.fc = nn.Sequential(
                nn.LazyLinear(out_features=64),
                nn.ReLU(),
                nn.Linear(in_features=64, out_features=2),
            )

        def forward(self, x: torch.Tensor) -> torch.Tensor:
            x = self.conv(x)
            x = self.flatten(x)
            x = self.fc(x)
            return x
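To get a feel for what these layers do to an image's size, here is a rough sketch in plain Python that traces the height and width through the five conv and pool pairs. The 224×224 input size is an assumption made for illustration (the real size depends on the dataset), and the helper names are hypothetical. The formulas are the standard output-size rules for a convolution and a max pool with the settings used in the model.

```python
def conv_out(size: int, kernel: int = 3, padding: int = 1, stride: int = 1) -> int:
    """Output size of a convolution along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size: int, kernel: int = 2, stride: int = 2) -> int:
    """Output size of a max pool along one dimension."""
    return (size - kernel) // stride + 1


def flattened_features(height: int, width: int,
                       channels: tuple[int, ...] = (32, 64, 128, 128, 128)) -> int:
    """Trace an image through each conv + pool pair and return the flattened length."""
    for out_channels in channels:
        # kernel_size=3 with padding=1 leaves the size unchanged...
        height, width = conv_out(height), conv_out(width)
        # ...and each 2x2 pool with stride 2 halves it.
        height, width = pool_out(height), pool_out(width)
        print(f"{out_channels} channels, {height}x{width}")
    return channels[-1] * height * width


# Assumed input: a single-channel 224x224 image.
features = flattened_features(224, 224)
print(features)  # 6272, i.e. 128 * 7 * 7
```

Under that assumption, the flattened length is the number of inputs `nn.LazyLinear` would infer the first time the model sees a batch, which is why the model does not have to spell it out.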

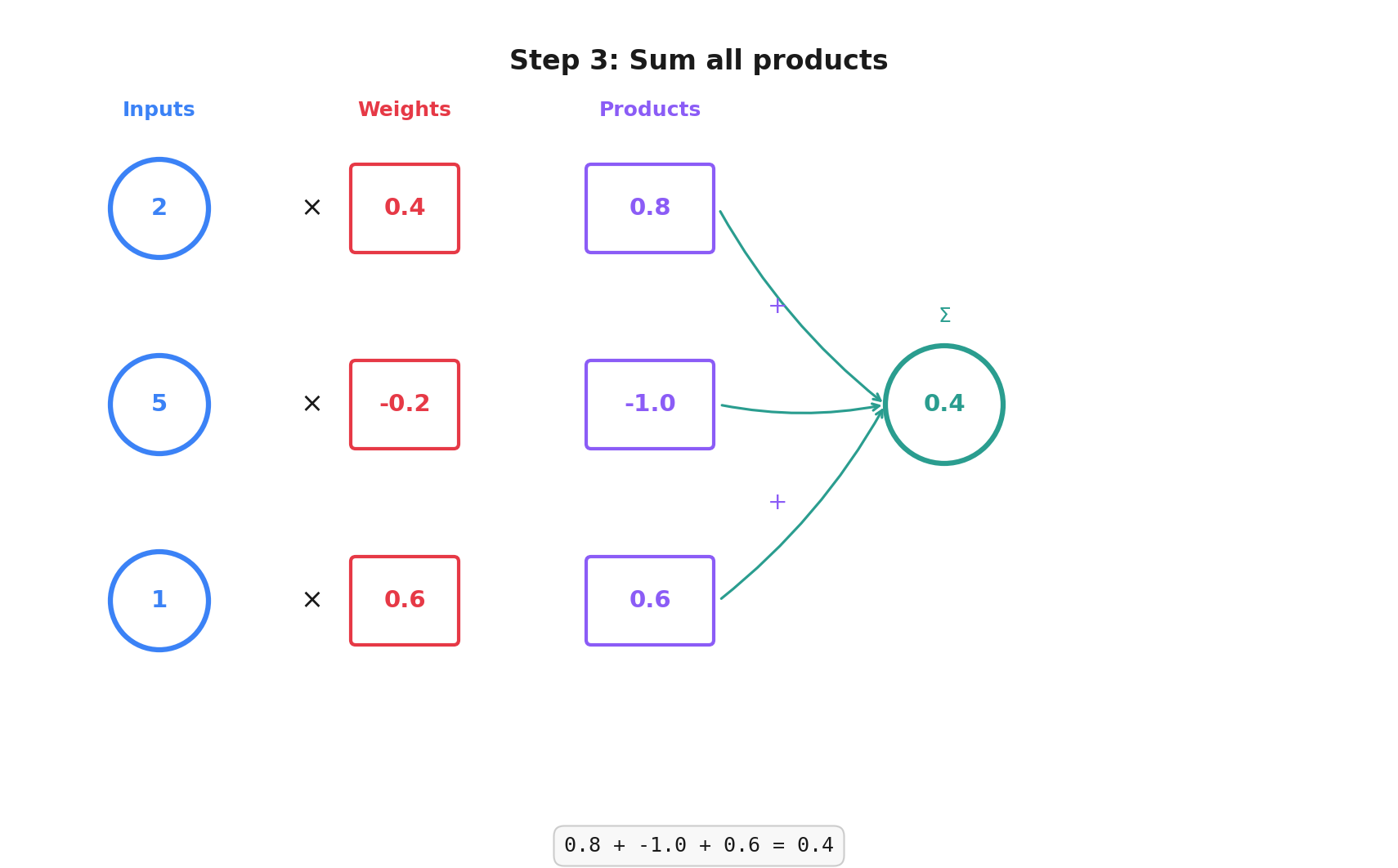

Fully connected layers are the oldest part of our network. They're made up of perceptrons, the simplest possible neural network unit, which Frank Rosenblatt invented in 1957.
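A minimal sketch of a single classic perceptron, assuming hand-picked weights rather than learned ones: it multiplies each input by a weight, adds a bias, and fires if the total is positive. The weights below are chosen so that it behaves like a logical AND.

```python
def perceptron(inputs: list[float], weights: list[float], bias: float) -> int:
    """Weighted sum of the inputs plus a bias, followed by a step function."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if weighted_sum > 0 else 0


# Hand-picked values (an assumption for illustration) that implement AND.
and_weights = [1.0, 1.0]
and_bias = -1.5

for a in (0.0, 1.0):
    for b in (0.0, 1.0):
        print(a, b, perceptron([a, b], and_weights, and_bias))
```

A fully connected layer such as `nn.Linear` computes many of these weighted sums at once, one per output feature. In our model the step function is replaced by the separate `nn.ReLU()` layer, and the weights are learned during training instead of being set by hand.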

The core ideas behind Convolutional Neural Networks (CNNs) go back to the late 1980s.

Yann LeCun published the foundational paper in 1989. This is the same Yann LeCun who was Meta's chief AI scientist from 2013 to 2025, and who left because he believes LLMs are a dead end on the road to "superintelligent" models.

Have fun, Yann.

We're at the fun bit, where we decide on our model architecture and build out the layers.

The first surprise is just how little code this takes.

We'll put everything in train.py for now, to keep things simple.

PyTorch expects our data to live inside a Dataset, a list-like container where it can access individual training samples. We'll wrap our answersheet.json and the images it points to in a custom OrientationDataset class.
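"List-like" here means two methods: `__len__` to report how many samples there are and `__getitem__` to fetch one by index. The sketch below shows just that protocol in plain Python. The JSON layout (a list of objects with `filename` and `rotation` keys) and the class name are assumptions made for illustration, not the series' actual format, and a real version would subclass `torch.utils.data.Dataset` and load each image into a tensor.

```python
import json
import tempfile
from pathlib import Path


class OrientationDatasetSketch:
    """List-like wrapper around an answer sheet (hypothetical JSON layout)."""

    def __init__(self, answersheet_path: Path):
        self.root = Path(answersheet_path).parent
        # Assumed layout: [{"filename": "a.png", "rotation": 90.0}, ...]
        with open(answersheet_path) as f:
            self.entries = json.load(f)

    def __len__(self) -> int:
        return len(self.entries)

    def __getitem__(self, index: int) -> tuple[Path, float]:
        entry = self.entries[index]
        # A real dataset would open the image and convert it to a tensor here.
        return self.root / entry["filename"], float(entry["rotation"])


# Usage with a throwaway answer sheet so the sketch runs on its own.
with tempfile.TemporaryDirectory() as tmp:
    sheet = Path(tmp) / "answersheet.json"
    sheet.write_text(json.dumps([
        {"filename": "a.png", "rotation": 90.0},
        {"filename": "b.png", "rotation": 12.5},
    ]))
    dataset = OrientationDatasetSketch(sheet)
    sample_count = len(dataset)
    first_path, first_rotation = dataset[0]

print(sample_count, first_path.name, first_rotation)
```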

We need images to train our model, so let's create some synthetic data. We'll need images rotated by varying amounts, and we'll also need our ground truth, the correct answer: how many degrees we rotated each image by.

Without the ground truth, we can't tell our model how wrong its predictions are, so we can't train it and improve its answers.

You can let your eyes glaze over for this code. It's not important; it just gets us a dataset we can work with:

answersheet.json with the ground truth (correct answer) rotation for each image
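As a rough sketch of the ground-truth half of that job, the snippet below picks a random rotation for each image name and writes the result to an `answersheet.json`. The file layout, the helper name, and the image names are all assumptions made for illustration. Actually rotating and saving the images is left out.

```python
import json
import random
import tempfile
from pathlib import Path


def build_answersheet(image_names: list[str], seed: int = 0) -> list[dict]:
    """Pair each image name with a random rotation in degrees (hypothetical layout)."""
    rng = random.Random(seed)  # seeded so the dataset is reproducible
    return [
        {"filename": name, "rotation": round(rng.uniform(0.0, 360.0), 1)}
        for name in image_names
    ]


# Write to a temporary directory so the sketch leaves nothing behind.
with tempfile.TemporaryDirectory() as tmp:
    entries = build_answersheet(["0001.png", "0002.png", "0003.png"])
    sheet_path = Path(tmp) / "answersheet.json"
    sheet_path.write_text(json.dumps(entries, indent=2))
    reloaded = json.loads(sheet_path.read_text())

print(len(reloaded), "entries written")
```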

I'm a fan of uv from Astral for managing Python projects. It takes care of package management, Python versions and virtual environments. Use whatever you prefer, but the commands below assume uv.